My first passion is making my own rendering engines.

Some games that I have made or am working on

A few cool apps I made

I work at Zynga! Check out our great games!

A WebGL point-and-click 3D escape room game, using my own engine.

A new game demo I'm working on where you design and manage escape rooms.

My own WebGL engine, in development

A ray tracer I wrote in a single GLSL shader.

Escape is my first WebGL escape room simulation. It's based on the old one-room adventure games that we used to play back in the Flash days. Originally made for the Oculus Devkit 1, it no longer works in VR, but it's still fun! Can you use the clues and figure out how to open the door?

This is a demo of an idea I had for a tycoon-style game where you are in charge of designing and running escape rooms. AI customers will come and play your rooms. Can you keep the business afloat?

Launch Game Demo

I've worked with DirectX for many years in C++, then with SharpDX in C# for Windows and Xbox. Lately, with the advances in WebGL, I have shifted my focus to graphics rendering on the web.

My custom pipeline converts 3D models into a proprietary format that my WebGL engine uses. It handles FBX files and textures. Plans are underway to handle IK and bones.

Launch Pipeline

I made this lighting tester so I can see what my models look like under actual WebGL lights.

Launch Lighting Tester

MXFrame is the third generation of my graphics engine for WebGL, based on earlier DirectX versions.

MXFrame repository

I picked up the book 'The Ray Tracer Challenge' and thought I would try to make a ray tracing engine that ran in real time as an add-on to my WebGL engine.

The result is a fairly massive shader that I optimised enough to run well in real time. I was also able to make it work with a physics engine.

I'm currently trying to figure out how to get caustic light effects to work.

Launch Real Time Ray Tracer

First, I write the ray tracing code in pure JavaScript to get the math right, then I port it to GLSL and optimize. Here you will find the JavaScript version that I use for testing.

Ray Tracing testbed
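As an illustration of the "get the math right in JavaScript first" step, here is a minimal sketch of a ray-sphere intersection test, the kind of routine a ray tracer starts from. It is not code from the testbed: the names `dot`, `sub` and `intersectSphere` and the array-based vectors are assumptions made for this example.

```javascript
// Hypothetical helpers for illustration; not taken from the actual testbed.
// Vectors are plain [x, y, z] arrays.
function dot(a, b) {
  return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

function sub(a, b) {
  return [a[0] - b[0], a[1] - b[1], a[2] - b[2]];
}

// Returns the distances t along the ray (origin + t * direction) at which
// it crosses the sphere, nearest first, or an empty array on a miss.
function intersectSphere(origin, direction, center, radius) {
  const oc = sub(origin, center);
  // Coefficients of the quadratic a*t^2 + b*t + c = 0.
  const a = dot(direction, direction);
  const b = 2 * dot(direction, oc);
  const c = dot(oc, oc) - radius * radius;
  const discriminant = b * b - 4 * a * c;
  if (discriminant < 0) {
    return []; // The ray misses the sphere.
  }
  const root = Math.sqrt(discriminant);
  return [(-b - root) / (2 * a), (-b + root) / (2 * a)];
}

// A ray fired down the z axis at a unit sphere centred on the origin.
console.log(intersectSphere([0, 0, -5], [0, 0, 1], [0, 0, 0], 1)); // [ 4, 6 ]
```

Because the function uses only arithmetic, `dot` and a square root, a version like this should map closely onto GLSL, where `vec3`, `dot` and `sqrt` are built in.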

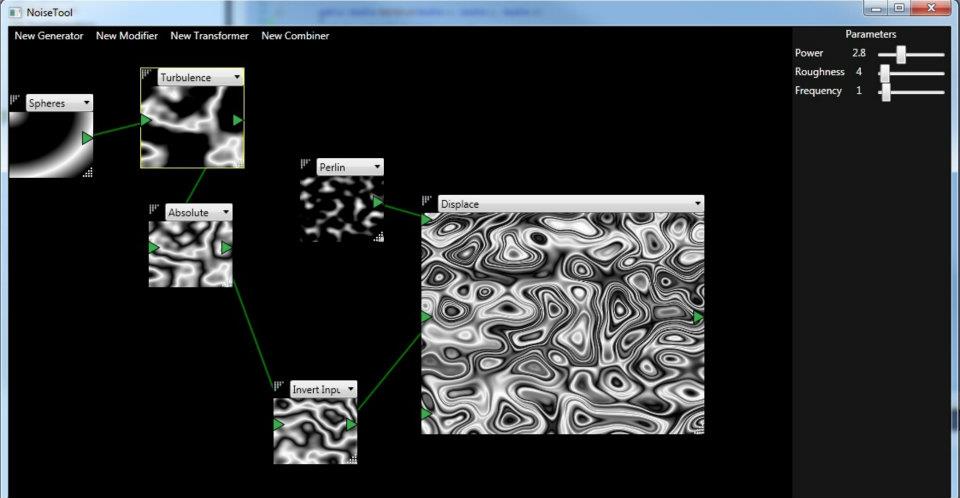

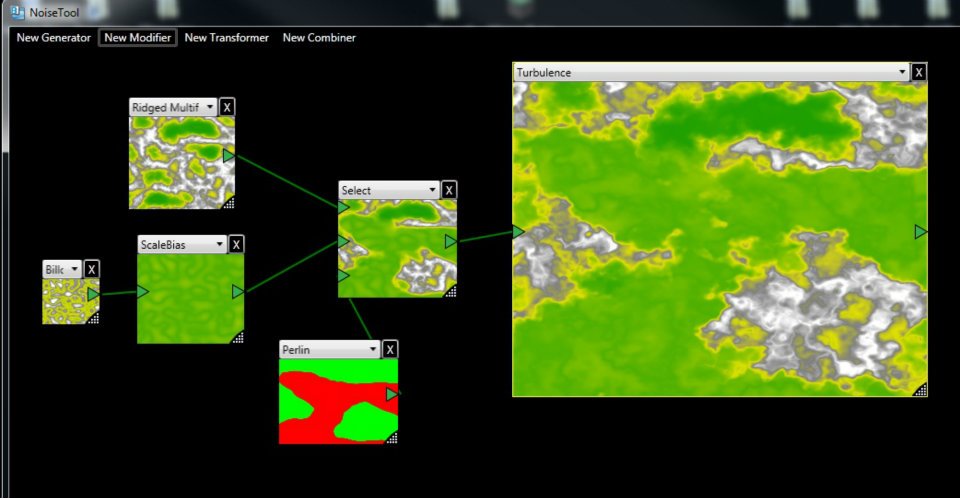

NoiseTool is a front end for the LibNoise library that lets you manipulate noise modules and view their output in real time by connecting outputs to sources. It can apply colour gradients to the data to create bitmap images, much like the original noise library's utilities can.

I use this noise library a lot to create heightmaps, voxel maps and procedural textures. I got tired of tweaking numbers in code without seeing what they were doing until I rendered the whole scene in game, so I created this tool to show me exactly what images I will get when noise modules are connected in a certain way.
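To give a rough idea of what applying a colour gradient to noise data involves, here is a minimal sketch that maps a noise value in the range -1 to 1 onto an RGB colour by interpolating between gradient stops. It does not use the LibNoise API or NoiseTool's code: `exampleGradient`, its stop values and `gradientColour` are assumptions made for this example.

```javascript
// Hypothetical gradient for illustration: [position, [r, g, b]] stops,
// sorted by position across the usual -1..1 noise output range.
const exampleGradient = [
  [-1, [0, 0, 100]],
  [0, [0, 100, 200]],
  [1, [200, 200, 200]],
];

// Returns the [r, g, b] colour for a noise value by linearly interpolating
// between the two stops that surround it. Values outside the gradient are
// clamped to the first or last stop.
function gradientColour(gradient, value) {
  const first = gradient[0];
  const last = gradient[gradient.length - 1];
  if (value <= first[0]) return first[1].slice();
  if (value >= last[0]) return last[1].slice();
  for (let i = 1; i < gradient.length; i++) {
    const [upperPos, upperColour] = gradient[i];
    if (value <= upperPos) {
      const [lowerPos, lowerColour] = gradient[i - 1];
      const t = (value - lowerPos) / (upperPos - lowerPos);
      return lowerColour.map((channel, k) =>
        Math.round(channel + (upperColour[k] - channel) * t)
      );
    }
  }
  return last[1].slice();
}

console.log(gradientColour(exampleGradient, -0.5)); // [ 0, 50, 150 ]
```

Running a function like this over every sample of a noise module's output would give one pixel colour per sample, which is enough to build a bitmap preview.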

I have been programming for as long as I can remember, and doing it professionally since 1996. I am mostly self-taught, having discovered C on my own in high school before studying Comp Sci in university, but I am always learning and reading about new things to get better. I am very interested in procedural algorithms and genetic software that can evolve itself. Since I don't have time for making game levels or scripting AI, it's easier if the game can do that itself!

I've been trying to write my own games since grade 8, with varied success. Most of them are nothing more than tech demos that never go anywhere, but they are all important steps in learning. I don't like using game engines and prefer to discover how to do everything myself from scratch. Over the last decade I've taught myself DirectX, HLSL, XNA, and WebGL programming, as well as PhysX and other physics engine SDKs.

The name Madox comes from the Japanese animation 'Madox-01 Metal Skin Panic'.

I am unrelated to The Best Page in the Universe.